Every time we incorporate a measurement into our assessment and update the probabilities of our hypotheses, we are predicting that a specific hypothesis matches the actual values of the coins. Our confidence in that prediction is the probability we assign to it. If we are doing well, then when we have 100% confidence in a hypothesis, we should have the right answer 100% of the time. Likewise, about half of the hypotheses to which we assign a 50% probability should, in the long run, be correct. To test our predictive skill, then, we just need to determine whether predictions to which we give n% confidence are correct about n% of the time.

As our probabilities are usually real values with many decimal places (e.g., 0.25385732605), it is unlikely that any single probability value will occur often enough for us to get a statistical measure of its accuracy. Instead, we need to 'bin' our hypotheses by their confidence values. For example, for confidence values between 0.40 and 0.45, we should get at least 40% correct (probably closer to 42.5%).
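The binning step described above can be sketched in a few lines of Python. This is a minimal illustration, not the code behind the charts in this post: the function name `calibration_table`, the `(confidence, was_correct)` input format, and the choice of 20 equal-width bins are all assumptions made for the example, and the demo data is synthetic rather than taken from the coin simulation.

```python
import random


def calibration_table(predictions, n_bins=20):
    """Group (confidence, was_correct) pairs into equal-width confidence bins.

    Returns a list of (bin_lower_edge, count, fraction_correct) tuples,
    one per non-empty bin, ordered by increasing confidence.
    """
    counts = [0] * n_bins
    hits = [0] * n_bins
    for confidence, was_correct in predictions:
        # Clamp so that a confidence of exactly 1.0 falls in the last bin.
        idx = min(int(confidence * n_bins), n_bins - 1)
        counts[idx] += 1
        hits[idx] += int(was_correct)
    return [
        (i / n_bins, counts[i], hits[i] / counts[i])
        for i in range(n_bins)
        if counts[i] > 0
    ]


if __name__ == "__main__":
    # Synthetic, perfectly calibrated predictor (an assumption for the demo):
    # a prediction made with confidence p is correct with probability p.
    rng = random.Random(0)
    demo = []
    for _ in range(42_000):
        p = rng.random()
        demo.append((p, rng.random() < p))
    for low, count, frac in calibration_table(demo):
        print(f"{low:.2f}-{low + 0.05:.2f}  n={count:5d}  correct={frac:.3f}")
```

For a well-calibrated predictor, the fraction correct in each row should sit near the middle of that bin's confidence range.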

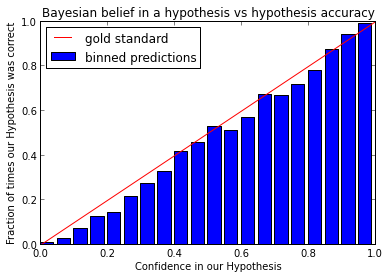

After 500 runs of our simulation, saving the best hypothesis after each new datapoint (about 42,000 predictions in total), we can put these predictions into bins and plot how frequently a prediction falling into the nth bin is correct:

By and large, we do pretty well. This chart shows our estimates to be correct, on average, about 1% less often than expected, which may well be within the noise due to discretization and limited samples. As we can see in the next chart, the points are far from uniformly distributed across the bins:

With more data points in the lower bins, we may find that estimate confidence aligns more closely with estimate accuracy.
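To get a rough feel for how much noise a sparsely populated bin can carry, one option is the binomial standard error of the observed hit rate. The sketch below assumes the predictions in a bin are independent, which is only an approximation here, since consecutive predictions within one simulation run share most of their data. The helper name `bin_standard_error` is hypothetical.

```python
import math


def bin_standard_error(confidence, n_samples):
    """Standard error of the observed fraction correct in one bin.

    Assumes n_samples independent predictions, each correct with
    probability `confidence` (a simplifying assumption).
    """
    return math.sqrt(confidence * (1.0 - confidence) / n_samples)


if __name__ == "__main__":
    # Noise in a bin centred on 42.5% confidence at different sample counts.
    for n in (25, 100, 2500):
        print(n, round(bin_standard_error(0.425, n), 4))
```

Under this approximation, the error shrinks with the square root of the sample count, so a bin needs roughly four times as many points to halve its noise.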